[…] Authorized translation of the original post by Tom Kuhlmann on the “Rapid E-Learning Blog”. The original post is available here […]

3 Simple Ways to Measure the Success of Your E-Learning

July 19th, 2011

Whenever I travel I like to spend some time hanging out with blog readers to answer questions. At a recent session someone asked, “How do I measure the success of my e-learning?”

That’s kind of a tricky question. While we may all use words like “e-learning” we don’t always mean the same thing. On top of that, just because it’s built with an e-learning tool doesn’t mean that the output is really an e-learning course.

Generally we think e-learning is built to change behaviors or improve performance, but that’s not always the case. Many organizations use the rapid e-learning tools just to share information. There are also quite a few people who use the rapid e-learning tools because they allow them to quickly build multimedia content.

Since there are different reasons why people build “e-learning” courses, there are different ways to measure success. In a previous post, we looked at measuring return on investment. Today we’ll review a few reasons why some are building courses and look at how they can be evaluated.

Performance Improvement

Building e-learning courses is not usually the organization’s business goal. E-learning is merely a solution that helps meet a business goal. Understanding that is important. I’ve worked on plenty of projects where producing the course was considered the success, but we never tracked whether the course itself produced any meaningful results.

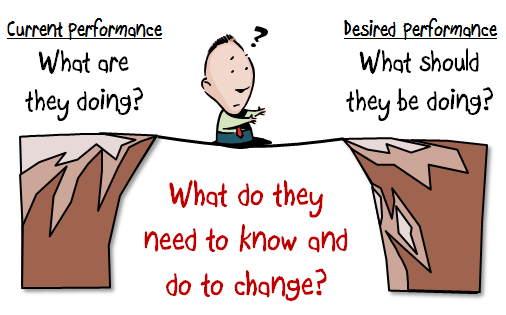

The trick is trying to figure out the real goal and how the e-learning course helps meet it. In a simple sense, the client is at Point A and they want to get to Point B. So the expectation is that your course is going to help them get there. Thus the measure of success isn’t that you have a course. Instead the measurement is how close you are to Point B.

Let’s assume that they’ve done all of the analysis and an e-learning course is the correct solution (a big assumption). They are using some metric that identifies where they’re currently at. And they’ll use that metric to determine if they’ve met their goals. You’ll use that same metric to determine your success.

If the client says we need to increase sales by 10%, your metric is increased sales. However, this is where it can get a bit tricky. Most times you can’t control a 10% sales increase because there are so many other factors involved. In that case you can set different metrics.

For example, in the past only 50% of the people took the training. Getting more exposure to the correct information is critical. A metric could be that 100% get the training. It’s not going to guarantee that you hit the 10% sales increase, but it does guarantee that you’ve delivered the training, which is one piece of the puzzle.

Another metric might be to do some sort of pre/post assessment to determine what they can do currently and then how well they can do it after the course. Again, you may not be able to guarantee a 10% sales increase, but you can state that after the training they were able to make the types of decisions and perform in a way that demonstrated the level of understanding they needed.
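To make those two metrics concrete, here’s a minimal sketch of how you might compute a completion rate and an average pre/post gain. The learner records, field order, and function names are all made up for illustration; in practice the data would come from whatever your LMS exports.

```python
from statistics import mean

# Hypothetical records: (learner, completed the course?, pre score, post score)
records = [
    ("ana", True, 55, 80),
    ("ben", True, 60, 85),
    ("cho", False, 50, None),  # never finished, so no post score
    ("dee", True, 70, 90),
]

def completion_rate(rows):
    """Share of assigned learners who finished the course (0.0 to 1.0)."""
    return sum(1 for _, done, _, _ in rows if done) / len(rows)

def average_gain(rows):
    """Mean post-minus-pre score among learners who have both scores."""
    gains = [post - pre for _, done, pre, post in rows if done and post is not None]
    return mean(gains)

print(f"Completion: {completion_rate(records):.0%}")
print(f"Average gain: {average_gain(records):.1f} points")
```

Neither number proves the sales goal was met, but both are things you can control and report. Note that the sketch assumes at least one assigned learner and at least one completed pre/post pair.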

This is why building courses that are connected to performance expectations is critical. It’s less about giving them information and more about how they use the information to make decisions. This post on pull versus push training gives you some insight into what that entails.

Organizational Compliance

While we may not want to admit it, there’s a lot of e-learning that’s kind of pointless from an instructional perspective. But it exists because the organization wants to show they delivered the training or met some regulatory and compliance guidelines.

I used to joke that instead of creating courses on how to be ethical, they should be teaching unethical people how to not get caught. It seems we don’t care much about ethics until it makes news. So the goal is really keeping them out of the news. It’s not like the folks at News Corp are saying things would be different if only they had that anti-wiretapping e-learning course.

I once met with our chief legal counsel who basically said he didn’t care what the course did as long as he could show that we offered the training. In that case a performance metric didn’t meet his needs.

If the compliance training isn’t tied to measurable business goals, you’ll need to find some other metrics such as how many completed the course. You could also do some sort of pre/post assessment to measure their understanding of the topics.

Sometimes it’s easier to measure your efficiency. For example, last year all of the compliance training was delivered in a classroom. This year it was delivered via e-learning. Compare the cost of the time spent in class to the cost of online delivery. It’s not going to tell you if any behavior has changed, but it will tell the organization that you’ve cut costs and become more efficient. And that counts for something.
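Here’s a rough sketch of that kind of efficiency comparison. Every figure below (headcount, hours, wage, fixed costs) is invented for the example, and the cost model is a simple assumption: learner time valued at an hourly wage plus a fixed delivery cost.

```python
def delivery_cost(learners, hours_each, hourly_wage, fixed_cost=0.0):
    """Learner time valued at their wage, plus any fixed delivery cost."""
    return learners * hours_each * hourly_wage + fixed_cost

# Hypothetical numbers: 200 people, a 2-hour class vs. a 45-minute online course.
classroom = delivery_cost(learners=200, hours_each=2.0, hourly_wage=30.0, fixed_cost=5000.0)
online = delivery_cost(learners=200, hours_each=0.75, hourly_wage=30.0, fixed_cost=3000.0)

savings = classroom - online
print(f"Classroom: ${classroom:,.0f}")
print(f"Online:    ${online:,.0f}")
print(f"Savings:   ${savings:,.0f} ({savings / classroom:.0%})")
```

Your own cost model will have more pieces (travel, facilities, development time), but even a simple version like this gives the organization a number to look at.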

There are purists who will rant and rave about how this isn’t real e-learning and we shouldn’t build courses like this. But it is what it is. I’d rather be an employed e-learning compromiser than an unemployed e-learning purist.

Sharing Information

Outside of performance improvement and compliance training, the most frequent use of rapid e-learning software is to share information. While the organizations may label it e-learning, to me it’s really more like a newspaper or website sharing news. The information is good to know and plays a role in things; it’s just that there’s no real performance expectation tied to it.

Some would say that in those cases they should just use a web page or create some sort of simple job aid or document. But what they miss is that people want to leverage the multimedia capabilities of the software. And besides, the rapid e-learning tools are so easy to use there’s not much of a difference in production time between creating a job aid and converting a PowerPoint file to Flash.

If sharing information is the goal, then the key metric would be to see how many people actually viewed the information. If you have some expectation for them to do something like download more info or visit a link, you can track hits to the link or the number of downloads. If that is your goal, then you may also want to employ some of the landing page strategies that are used to entice traffic to web sites. Those strategies could help meet your goal of getting exposure.
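As a quick sketch, the two numbers worth watching here are the share of the audience that viewed the content and the share of viewers who followed the link. The counts and names below are hypothetical; you’d pull the real ones from your LMS or web analytics.

```python
def rate(part, whole):
    """Fraction of `whole` represented by `part`; 0.0 when there is no audience."""
    return part / whole if whole else 0.0

# Hypothetical counts for one information-sharing module.
audience = 400     # people the module was sent to
viewers = 260      # people who opened it
link_clicks = 65   # viewers who followed the "more info" link

view_rate = rate(viewers, audience)
click_through = rate(link_clicks, viewers)

print(f"Viewed: {view_rate:.0%} of audience")
print(f"Clicked through: {click_through:.0%} of viewers")
```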

So there you have it, three simple ideas to help you get started measuring the success of your e-learning courses whether you’re seeking to change behaviors or just share information.

The key in all of this is knowing how to contribute to the organization’s success. Sometimes e-learning isn’t the right solution and sometimes it’s the best. I’ve recommended the Performance Consulting book in the past. It’s a good one to help you think more about focusing on the right goals. If you want to think a bit more strategically about where you fit in the organization and how you can make a meaningful impact, Running Training Like a Business is a good read.

What types of courses do you build? And what are you doing to measure their success? Share your thoughts by clicking on the comments link.

15 responses to “3 Simple Ways to Measure the Success of Your E-Learning”

Read the (authorized) Italian translation of this post here:

[…] See original here: 3 Simple Ways to Measure the Success of Your E-Learning » The Rapid eLearning Blog […]

Thank you for acknowledging the reality of some compliance training. While I wish all training was performance based and done for a real business need, being in compliance is a real business need – so as long as regulatory bodies ask about the number of people that got trained, part of our job will be creating “check the box” compliance training. I just hope it’s never the whole job.

I laughed out loud several times as I read this article! Particularly the section on Organization Compliance! So true!

My supervisors judge my success on the number of modules I’m able to produce. . .I doubt many of them have ever opened one up or could pick my work out of an eLearning lineup. (I tell myself that’s because they trust me – LOL!)

As to MY method of measuring success, I can’t quantify it, but when a sales, support, or implementation person comes to me and wants to know where “that thing you did on (whatever)” is located because “my customer needs it”, or a classroom instructor tells me that the clients who went through the eLearning before the class are much easier to teach, I figure I’ve done something right.

Please never stop this blog – I look forward to it every week!

This article has really helped answer many of my questions about a course that I am currently building for my company. It is an “Annual Refresher” course, and the employees do not have access to their own computers, so it must be facilitated in a group setting. And because it is a “Refresher” and most employees have been at the company for 10+ years, the information is not new to them, regardless of how creative I get. The purpose of this course is just to meet compliance requirements and to ensure that most (90% or more) employees attend and understand this training. But, after reviewing all of the awesome e-learning courses that are available here, I felt somewhat discouraged because my course is a PowerPoint with audio recording that people can watch at their convenience. However, my own personal success is that I didn’t use any bullet points, and I used many, many graphics (which is a huge improvement for me :)). Now, after reading your article, I feel like I am on the right track with this presentation and I don’t feel discouraged that it may not be “award-winning”. I really feel like I am meeting the purpose with this course and creating it for the audience (for most of whom English is a second language, so I simplified the text and added tons of graphics). Thanks Tom for your inspiration and thanks for this article.

Today’s topic was very germane to the “Trainer’s dilemma” and worth spending some more time on. To answer any of the three scenarios, the first thing must be a complete understanding of the challenge. Many trainers who I’ve known are always concerned about their relevance to the organization, and about being busy. Often any performance problem is “Always a training problem”, and many courses are developed and paid for only to have the client lament that it cost too much and the performance didn’t change.

It takes a lot of organizational courage to speak knowledge to power and tell folks that training may not be the answer and that some other intervention is necessary. While it may seem very scary at the time, the results are often far more gratifying because it establishes the training person as a performance engineer, not just a classroom performer.

Tom Gilbert wrote a seminal book in the 70s about Human Performance Engineering which spoke to really understanding what performance was really desired and what was missing in the pre-training performance. Then specific interventions could be tailored to improve those specific shortcomings, a la a golf instructor improving your swing after viewing a slo-mo video.

Having been in this business for generations, I have seen it all: the courses to appease the corporate psyche, the misguided programs that produced nothing, and the smash hits that directly affected the targeted behavior. Sometimes you luck out, but usually it’s the pre-work, developing the deep understanding of where you want to go, that produces the best results.

At a previous employer, the J.D. Power scores were dropping. We were lucky and had customer evaluations, and we had some parts of the company that were getting perfect scores. We analyzed what the good groups did to get their high scores and then based the training on those specific behaviors. The program was a smash hit and the J.D. Power scores went up. That is a good example of the “best case scenario”.

After spending 35 years of my life building, delivering and (sometimes effectively) measuring the true impact of classroom training and e-learning, I would like to recommend, for your expert consideration, a robust and comprehensive learning model for all of us to follow to improve our outcomes. I have no financial interest in this book or company; I just find it to be the most robust model on learning design yet.

It’s Called: The Six Disciplines of Breakthrough Learning: How to Turn Training and Development Into Business Results

Notable Reviews:

“All the training in the world does not mean a thing, unless there is true transfer! The Six Disciplines is a jewel, loaded with practical perspectives on creating true ROI from learning investments.”

—Elliott Masie, CEO, The MASIE Center’s Learning CONSORTIUM

“The Holy Grail for every corporate learning department today—and for the CEOs who critically depend on them—is sustained learning that drives performance. In this thorough and timely book, the authors show step-by-step how genuine learning can, at last, be found.”

—John Alexander, president, Center for Creative Leadership

“Six Disciplines is a timely book written by experienced authors to help learning and development professionals deliver results. With proven methods, presented in a logical style, this book is a must read for anyone interested in improving the impact of training and development.”

—Jack J. Phillips, chairman, ROI Institute, author of thirty books

“The Six Disciplines describes and illustrates six principles practiced by companies that earn the highest returns by efficiently converting learning into business results. A truly valuable book!”

—Ken Blanchard, coauthor, The One Minute Manager® and The Secret

@Tom, I love your getting from Point A to Point B.

In ASTD parlance, that’s called Gap Analysis, as you know, and it’s a tool I’ve used in the Analysis phase of ADDIE quite often. Performing a Gap Analysis has frequently resulted in e-learning courses that produce positive and measurable workplace performance results.

And, like others, I’ve worked on my fair share of Compliance courses!

@jenisecook

[…] 3 Simple Ways to Measure the Success of Your E-Learning […]

[…] 3 Simple Ways to Measure the Success of Your E-Learning: Everyone’s yacking about ROI, but here are three practical tips to wow your boss and co-workers. […]

I just want to give a huge thank you to everyone for all of your tips, tricks, and ideas on how to create successful e-Learning. I submitted my e-Learning project to our Quality Team and they Loved It! I was so nervous because we have always facilitated live classes for the Annual Compliance Training. This is the first year that we are doing a pre-recorded session for the employees in the plant. I am so excited and wanted to share….we need a “bragging” blog 🙂 And, this is also the first PowerPoint where I steered away from using bullet points and instead tried to add simple animations and “good” images to make clear and concise points. Thanks again to everyone for all your help. What e-Learning course should I tackle next…..?

[…] post “3 Simple ways to measure the success of your e-learning” has been a big help this week as I recast my Slideshare presentation on the innovativeness of […]
